Quickstart

Let's build!

Get up and running with the Telekinesis Agentic Skill Library and run your first end-to-end example in just a few minutes.

The Telekinesis Agentic Skill Library is published on PyPI as telekinesis-ai. In this guide, Skills refer to the reusable capabilities exposed by the library, such as segmentation, object detection, point cloud filtering, camera capture, and kinematics.

Quick Install

Install the Telekinesis Agentic Skill Library from PyPI. Requires Python 3.11 or 3.12:

pip install telekinesis-aiCreate a TELEKINESIS_API_KEY at the Telekinesis Platform and export it to your environment.

Pick a Starter

Six entry points - pick the one that matches your stack. Each takes only a couple of minutes and ends with a working Skill result shown in Rerun.

telekinesis-examples and run any of dozens of pre-built Skills across vision, robotics, and 3D. Try It Now: Foreground Segmentation (Cornea)

This starter downloads a sample image, runs the BiRefNet foreground segmenter, and visualizes the result in a Rerun viewer - a fast end-to-end check that the vision side of your install is healthy. See the Skill reference for the behavior.

Show install, script, and run

1. Install the runtime dependencies

In your active telekinesis environment, install the visualization library:

# Rerun is the 3D/2D viewer the script opens so you can see the image and mask side by side.

pip install rerun-sdk==0.31.22. Save the script

Create a file called quickstart_birefnet_example.py anywhere on your machine and paste the following:

"""Telekinesis quickstart: extract the foreground from an image with BiRefNet.

Loads a sample image from a public URL, runs BiRefNet foreground

segmentation, and visualizes the input alongside the predicted mask

in a Rerun viewer.

Run as a script - python quickstart_birefnet_example.py

"""

import cv2

import numpy as np

import requests

import rerun as rr

import rerun.blueprint as rrb

from loguru import logger

from datatypes import datatypes

from telekinesis import cornea, pupil

# Public sample image shipped by Telekinesis (you can swap this for a local file later).

IMAGE_URL = (

"https://telekinesis-public-assets.s3.us-east-1.amazonaws.com/screws_standing.jpg"

)

def main() -> None:

# Download and decode JPEG bytes to BGR, then convert to RGB for datatypes.Image

response = requests.get(IMAGE_URL, timeout=60)

response.raise_for_status()

image_bgr = cv2.imdecode(

np.frombuffer(response.content, dtype=np.uint8), cv2.IMREAD_COLOR,

)

image_bgr = datatypes.Image(image=image_bgr, color_model="BGR")

image = pupil.convert_image_color_space(image_bgr, source_color_space="BGR", target_color_space="RGB")

# Wrap pixels in Telekinesis's typed Image object.

logger.success(f"Loaded image from {IMAGE_URL}")

# Skill call: BiRefNet returns an annotation object we convert to a plain dict for logging

result = cornea.segment_image_using_foreground_birefnet(

image=image,

mask_threshold=0,

)

annotation = result.to_dict()

logger.success("BiRefNet foreground segmentation complete.")

# Opens the Rerun viewer (spawn=True).

rr.init("telekinesis_birefnet_quickstart", spawn=True)

# Log input image and segmented image as separate entities.

rr.log("input", rr.Image(image.to_numpy()))

rr.log("segmented_mask", rr.Image(annotation["labeled_mask"]))

if __name__ == "__main__":

main()3. Run it

python quickstart_birefnet_example.pyA Rerun window will open with two panels side by side: the original image on the left and the BiRefNet foreground mask on the right.

If this works, your installation is healthy and ready for real workloads. The rest of the Quickstart shows how to discover and run the full library of examples.

Try It Now: Voxel Downsampling (Vitreous)

This starter downloads a sample PLY point cloud, runs filter_point_cloud_using_voxel_downsampling from Vitreous, and shows the input and filtered cloud in a Rerun viewer. See the Skill reference for the behavior.

Show install, script, and run

1. Install the runtime dependencies

In your active telekinesis environment, install the visualization library:

pip install rerun-sdk==0.31.22. Save the script

Create a file called quickstart_voxel_downsample_example.py anywhere on your machine and paste the following:

"""

Telekinesis quickstart: downsample a point cloud with voxel filtering.

Loads a sample point cloud from a public URL, runs Voxel downsampling,

and visualizes the input and the filtered point cloud

in a Rerun viewer.

Run as a script - python quickstart_voxel_downsample_example.py

"""

from pathlib import Path

import requests

import rerun as rr

from loguru import logger

from datatypes import io

from telekinesis import vitreous

# Public sample point cloud shipped by Telekinesis (you can swap this for a local file later).

POINT_CLOUD_URL = (

"https://telekinesis-public-assets.s3.us-east-1.amazonaws.com/point_clouds/"

"can_vertical_1_subtracted.ply"

)

# Written under the current working directory when you run the script.

POINT_CLOUD_PATH = Path("can_vertical_1_subtracted.ply")

def main() -> None:

# Download PLY bytes and write a local file for io.load_point_cloud

response = requests.get(POINT_CLOUD_URL, timeout=60)

response.raise_for_status()

POINT_CLOUD_PATH.write_bytes(response.content)

# Typed PointCloud: positions (N,3) and optional colors - this asset includes colors.

point_cloud = io.load_point_cloud(filepath=str(POINT_CLOUD_PATH))

logger.success(f"Loaded point cloud with {len(point_cloud.positions)} points")

# One Skill call: points in the same voxel cell are merged. Smaller voxel_size keeps more detail.

filtered_point_cloud = vitreous.filter_point_cloud_using_voxel_downsampling(

point_cloud=point_cloud,

voxel_size=0.005,

)

logger.success("Voxel downsampling complete.")

# Opens the Rerun viewer (spawn=True).

rr.init("telekinesis_voxel_downsampling_quickstart", spawn=True)

# Log full-resolution cloud and filtered cloud as separate entities in the 3D view.

rr.log(

"input_point_cloud",

rr.Points3D(

positions=point_cloud.positions,

colors=point_cloud.colors,

),

)

rr.log(

"filtered_point_cloud",

rr.Points3D(

positions=filtered_point_cloud.positions,

colors=filtered_point_cloud.colors,

),

)

if __name__ == "__main__":

main()3. Run it

python quickstart_voxel_downsample_example.pyIf this works, your Vitreous stack is healthy. The rest of the Quickstart shows how to discover and run the full library of examples (including the same Vitreous Skill via vitreous_examples.py once you clone telekinesis-examples).

Try It Now: Capture from a Webcam (Medulla)

This starter connects to a USB webcam, captures a single color image, and visualizes it in Rerun viewer - a fast way to confirm the Medulla hardware communication skills are wired up correctly. See the Skill reference for the behavior.

Hardware required

You need a USB webcam connected to your machine. A built-in laptop camera works too - camera_id=0 selects the default camera.

Show install, script, and run

1. Install the runtime dependencies

In your active telekinesis environment, install the Medulla extras and the visualization dependencies:

pip install "telekinesis-ai[medulla]" rerun-sdk==0.31.22. Save the script

Create a file called quickstart_webcam_example.py anywhere on your machine and paste the following:

"""Telekinesis quickstart: capture a single frame from a USB webcam.

Connects to the default webcam, captures one color frame, and visualizes

it in a Rerun viewer.

Run as a script - python quickstart_webcam_example.py

"""

import rerun as rr

from loguru import logger

from telekinesis.medulla.cameras import webcam

def main() -> None:

# Opens the Rerun viewer (spawn=True).

rr.init("telekinesis_medulla_quickstart", spawn=True)

# camera_id=0 is usually the default laptop camera or first USB webcam.

camera = webcam.Webcam(name="my_webcam_camera", camera_id=0)

try:

camera.connect()

# Skill call: Capture one color image as an HWC uint8 NumPy array.

image = camera.capture_color_image()

height, width = image.shape[:2]

logger.success(f"Captured a {width}x{height} frame")

# Log under entity path "webcam" so it appears in the spatial view.

rr.log("webcam", rr.Image(image))

except Exception as exc:

logger.error(f"Failed to capture frame: {exc!r}")

finally:

camera.disconnect()

if __name__ == "__main__":

main()3. Run it

python quickstart_webcam_example.pyA Rerun window will open showing a single frame captured from your webcam. If you see your face (or whatever your camera is pointing at), Medulla is correctly installed and able to talk to your hardware.

Other cameras

The same pattern works for IDS and Intel RealSense cameras with the matching extras (telekinesis-ai[medulla-ids] or telekinesis-ai[medulla-realsense]). See the Medulla overview for the full list of supported vendors.

Try It Now: Set Cartesian Pose (Synapse)

This starter drives a manipulator along a closed Cartesian trajectory- a circle in the YZ plane around its home TCP pose, and visualizes the robot and TCP path live in Rerun.

Pick a tab to see that manipulator!

Show install, script, and run

1. Install the runtime dependencies

One-time setup for Synapse

Synapse depends on telekinesis-urdfs for robot model data. It must be installed before the package. The URDFs repository is large and the build may take several minutes the first time.

In your active telekinesis environment, install the Synapse additionals and the visualization dependencies:

git clone --depth 1 https://github.com/telekinesis-ai/telekinesis-urdfs.git

cd telekinesis-urdfs

pip install .

cd ..

pip install "telekinesis-ai[synapse]" rerun-sdk==0.31.22. Save the script

Pick the brand you want to try. Each tab is a standalone, runnable offline script that imports only that brand's manipulators module.

"""

Telekinesis quickstart: drive a Universal Robots robot along a YZ-plane circle via Cartesian pose targets.

No Hardware Required - runs entirely in software with live visualization in Rerun.

Traces a closed circle with radius 0.20m in the YZ plane around the home TCP pose. The TCP

path is drawn live as a connected line with a hue gradient (older

segments blue, newest red).

Install:

pip install rerun-sdk==0.31 # tested on 0.31

Run:

python examples/py/github_examples/universal_robots_set_cartesian_pose_with_visualization.py

"""

import colorsys

import time

import numpy as np

import rerun as rr

from loguru import logger

from telekinesis.synapse.robots.manipulators import universal_robots

def visualize_robot(robot, static_meshes: bool = False) -> None:

"""Log per-link transforms to rerun, plus the static meshes on the first call."""

if static_meshes:

for link, m in robot.get_visual_meshes_data().items():

if m["vertices"] is None:

continue

kwargs: dict = {

"vertex_positions": m["vertices"],

"triangle_indices": m["triangles"],

"vertex_normals": m["vertex_normals"],

}

if m["vertex_colors"] is not None:

kwargs["vertex_colors"] = m["vertex_colors"]

else:

kwargs["albedo_factor"] = m["color"] or [179, 179, 179]

rr.log(f"/robot/{link}", rr.Mesh3D(**kwargs), static=True)

for link, T in robot.get_visual_mesh_transforms().items():

rr.log(f"/robot/{link}", rr.Transform3D(translation=T[:3, 3], mat3x3=T[:3, :3]))

def visualize_path(path: list[list[float]], entity: str = "/trajectory") -> None:

"""Draw the TCP path as connected segments with a blue→red hue gradient."""

if len(path) < 2:

return

segments = [[path[i], path[i + 1]] for i in range(len(path) - 1)]

n = max(1, len(segments) - 1)

colors = [

[int(c * 255) for c in colorsys.hsv_to_rgb((1.0 - i / n) * (240.0 / 360.0), 1.0, 1.0)]

for i in range(len(segments))

]

rr.log(entity, rr.LineStrips3D(segments, colors=colors, radii=0.003))

def main():

"""Trace a YZ-plane circle around the UR16E's home TCP pose, visualized in rerun."""

# Visualization tick rate

hz = 30

dt = 1.0 / hz

# Circle motion parameters (radius in meters, number of segments)

radius = 0.20

n_steps = 200

# Create robot

robot = universal_robots.UniversalRobotsUR16E()

# Initialize rerun and log static meshes

rr.init(f"telekinesis_synapse_{type(robot).__name__}", spawn=True)

visualize_robot(robot, static_meshes=True)

time.sleep(2.0)

# Extract home pose

home_pose = robot.get_cartesian_pose()

logger.info(f"Tracing circle of radius {radius:.3f} m in YZ plane ({n_steps} steps)")

# Robot motion: draw circle in YZ plane, visualize robot and TCP path

path: list[list[float]] = []

for step in range(n_steps + 1):

theta = 2.0 * np.pi * step / n_steps

# Circle in the YZ plane, offset so it "kisses" the home pose at theta=0.

pose = home_pose.copy()

pose[1] = home_pose[1] + radius * np.cos(theta) - radius

pose[2] = home_pose[2] + radius * np.sin(theta)

# Move the robot

try:

robot.set_cartesian_pose(pose)

except ValueError:

continue # outside reach / joint limits

# Visualize robot and path

visualize_robot(robot)

actual = robot.get_cartesian_pose()

path.append([float(actual[0]), float(actual[1]), float(actual[2])])

visualize_path(path)

# Sleep to maintain a consistent visualization tick rate

time.sleep(dt)

if __name__ == "__main__":

main()"""

Telekinesis quickstart: drive a Fanuc robot along a YZ-plane circle via Cartesian pose targets.

No Hardware Required - runs entirely in software with live visualization in Rerun.

Traces a closed circle of radius 0.20m in the YZ plane around the home TCP pose. The TCP

path is drawn live as a connected line with a hue gradient (older

segments blue, newest red).

Install:

pip install rerun-sdk==0.31 # tested on 0.31

Run:

python examples/py/github_examples/fanuc_set_cartesian_pose_with_visualization.py

"""

import colorsys

import time

import numpy as np

import rerun as rr

from loguru import logger

from telekinesis.synapse.robots.manipulators import fanuc

def visualize_robot(robot, static_meshes: bool = False) -> None:

"""Log per-link transforms to rerun, plus the static meshes on the first call."""

# Log static meshes once

if static_meshes:

for link, m in robot.get_visual_meshes_data().items():

if m["vertices"] is None:

continue

kwargs: dict = {

"vertex_positions": m["vertices"],

"triangle_indices": m["triangles"],

"vertex_normals": m["vertex_normals"],

}

if m["vertex_colors"] is not None:

kwargs["vertex_colors"] = m["vertex_colors"]

else:

kwargs["albedo_factor"] = m["color"] or [179, 179, 179]

rr.log(f"/robot/{link}", rr.Mesh3D(**kwargs), static=True)

# Log per-link transforms on every update

for link, T in robot.get_visual_mesh_transforms().items():

rr.log(f"/robot/{link}", rr.Transform3D(translation=T[:3, 3], mat3x3=T[:3, :3]))

def visualize_path(path: list[list[float]], entity: str = "/trajectory") -> None:

"""Draw the TCP path as connected segments with a blue→red hue gradient."""

if len(path) < 2:

return

segments = [[path[i], path[i + 1]] for i in range(len(path) - 1)]

n = max(1, len(segments) - 1)

colors = [

[int(c * 255) for c in colorsys.hsv_to_rgb((1.0 - i / n) * (240.0 / 360.0), 1.0, 1.0)]

for i in range(len(segments))

]

rr.log(entity, rr.LineStrips3D(segments, colors=colors, radii=0.003))

def main():

"""Trace a YZ-plane circle around the Fanuc's home TCP pose, visualized in rerun."""

# Frequency to update the visualization (Hz)

hz = 30

dt = 1.0 / hz

# Radius of the circle to trace (meters)

radius = 0.2

n_steps = 200

# Create robot

robot = fanuc.FanucM10IA()

# Initialize rerun and log static meshes

rr.init(f"telekinesis_synapse_{type(robot).__name__}", spawn=True)

visualize_robot(robot, static_meshes=True)

time.sleep(2.0)

# Get home pose (default configuration)

home_pose = robot.get_cartesian_pose()

logger.info(f"Tracing circle of radius {radius:.3f} m in YZ plane ({n_steps} steps)")

# Robot motion: draw circle in YZ plane, visualize robot and TCP path

path: list[list[float]] = []

for step in range(n_steps + 1):

theta = 2.0 * np.pi * step / n_steps

# Circle in the YZ plane, offset so it "kisses" the home pose at theta=0.

pose = home_pose.copy()

pose[1] = home_pose[1] + radius * np.cos(theta) - radius

pose[2] = home_pose[2] + radius * np.sin(theta)

# Move the robot

try:

robot.set_cartesian_pose(pose)

except ValueError:

continue # outside reach / joint limits

# Visualize robot and path

visualize_robot(robot)

actual = robot.get_cartesian_pose()

path.append([float(actual[0]), float(actual[1]), float(actual[2])])

visualize_path(path)

# Sleep to maintain a consistent visualization rate.

time.sleep(dt)

if __name__ == "__main__":

main()"""

Telekinesis quickstart: drive a Neura Robotics robot along a YZ-plane circle via Cartesian pose targets.

No Hardware Required - runs entirely in software with live visualization in Rerun.

Traces a closed circle of radius 0.30m in the YZ plane around the home TCP pose. The TCP

path is drawn live as a connected line with a hue gradient (older

segments blue, newest red).

Install:

pip install rerun-sdk==0.31 # tested on 0.31

Run:

python examples/py/github_examples/neura_robotics_set_cartesian_pose_with_visualization.py

"""

import colorsys

import time

import numpy as np

import rerun as rr

from loguru import logger

from telekinesis.synapse.robots.manipulators import neura_robotics

def visualize_robot(robot, static_meshes: bool = False) -> None:

"""Log per-link transforms to rerun, plus the static meshes on the first call."""

# Log static meshes once

if static_meshes:

for link, m in robot.get_visual_meshes_data().items():

if m["vertices"] is None:

continue

kwargs: dict = {

"vertex_positions": m["vertices"],

"triangle_indices": m["triangles"],

"vertex_normals": m["vertex_normals"],

}

if m["vertex_colors"] is not None:

kwargs["vertex_colors"] = m["vertex_colors"]

else:

kwargs["albedo_factor"] = m["color"] or [179, 179, 179]

rr.log(f"/robot/{link}", rr.Mesh3D(**kwargs), static=True)

# Log per-link transforms on every update

for link, T in robot.get_visual_mesh_transforms().items():

rr.log(f"/robot/{link}", rr.Transform3D(translation=T[:3, 3], mat3x3=T[:3, :3]))

def visualize_path(path: list[list[float]], entity: str = "/trajectory") -> None:

"""Draw the TCP path as connected segments with a blue→red hue gradient."""

if len(path) < 2:

return

segments = [[path[i], path[i + 1]] for i in range(len(path) - 1)]

n = max(1, len(segments) - 1)

colors = [

[int(c * 255) for c in colorsys.hsv_to_rgb((1.0 - i / n) * (240.0 / 360.0), 1.0, 1.0)]

for i in range(len(segments))

]

rr.log(entity, rr.LineStrips3D(segments, colors=colors, radii=0.003))

def main():

"""Trace a YZ-plane circle around the LARA8's home TCP pose, visualized in rerun."""

# Frequency to update the visualization (Hz)

hz = 30

dt = 1.0 / hz

# Radius of the circle to trace (meters)

radius = 0.30

n_steps = 200

# Create robot

robot = neura_robotics.NeuraRoboticsMAiRA7M()

# Initialize rerun and log static meshes

rr.init(f"telekinesis_synapse_{type(robot).__name__}", spawn=True)

visualize_robot(robot, static_meshes=True)

time.sleep(2.0)

# Get home pose (default configuration)

home_pose = robot.get_cartesian_pose()

logger.info(f"Tracing circle of radius {radius:.3f} m in YZ plane ({n_steps} steps)")

# Robot motion: draw circle in YZ plane, visualize robot and TCP path

path: list[list[float]] = []

for step in range(n_steps + 1):

theta = 2.0 * np.pi * step / n_steps

# Circle in the YZ plane, offset so it "kisses" the home pose at theta=0.

pose = home_pose.copy()

pose[1] = home_pose[1] + radius * np.cos(theta) - radius

pose[2] = home_pose[2] + radius * np.sin(theta)

# Move the robot

try:

robot.set_cartesian_pose(pose)

except ValueError:

continue # outside reach / joint limits

# Visualize robot and path

visualize_robot(robot)

actual = robot.get_cartesian_pose()

path.append([float(actual[0]), float(actual[1]), float(actual[2])])

visualize_path(path)

# Sleep to maintain a consistent visualization rate.

time.sleep(dt)

if __name__ == "__main__":

main()"""

Telekinesis quickstart: drive an ABB robot along a YZ-plane circle via Cartesian pose targets.

No Hardware Required - runs entirely in software with live visualization in Rerun.

Traces a closed circle of radius 0.5m in the YZ plane around the home TCP pose. The TCP

path is drawn live as a connected line with a hue gradient (older

segments blue, newest red).

Install:

pip install rerun-sdk==0.31 # tested on 0.31

Run:

python examples/py/github_examples/abb_set_cartesian_pose_with_visualization.py

"""

import colorsys

import time

import numpy as np

import rerun as rr

from loguru import logger

from telekinesis.synapse.robots.manipulators import abb

def visualize_robot(robot, static_meshes: bool = False) -> None:

"""Log per-link transforms to rerun, plus the static meshes on the first call."""

# Log static meshes once

if static_meshes:

for link, m in robot.get_visual_meshes_data().items():

if m["vertices"] is None:

continue

kwargs: dict = {

"vertex_positions": m["vertices"],

"triangle_indices": m["triangles"],

"vertex_normals": m["vertex_normals"],

}

if m["vertex_colors"] is not None:

kwargs["vertex_colors"] = m["vertex_colors"]

else:

kwargs["albedo_factor"] = m["color"] or [179, 179, 179]

rr.log(f"/robot/{link}", rr.Mesh3D(**kwargs), static=True)

# Log per-link transforms on every update

for link, T in robot.get_visual_mesh_transforms().items():

rr.log(f"/robot/{link}", rr.Transform3D(translation=T[:3, 3], mat3x3=T[:3, :3]))

def visualize_path(path: list[list[float]], entity: str = "/trajectory") -> None:

"""Draw the TCP path as connected segments with a blue→red hue gradient."""

if len(path) < 2:

return

segments = [[path[i], path[i + 1]] for i in range(len(path) - 1)]

n = max(1, len(segments) - 1)

colors = [

[int(c * 255) for c in colorsys.hsv_to_rgb((1.0 - i / n) * (240.0 / 360.0), 1.0, 1.0)]

for i in range(len(segments))

]

rr.log(entity, rr.LineStrips3D(segments, colors=colors, radii=0.003))

def main():

"""Trace a YZ-plane circle around the ABB's home TCP pose, visualized in rerun."""

# Frequency to update the visualization (Hz)

hz = 30

dt = 1.0 / hz

# Radius of the circle to trace (meters)

radius = 0.5

n_steps = 200

# Create robot

robot = abb.AbbIRB7600150350()

# Initialize rerun and log static meshes

rr.init(f"telekinesis_synapse_{type(robot).__name__}", spawn=True)

visualize_robot(robot, static_meshes=True)

time.sleep(2.0)

# Get home pose (default configuration)

home_pose = robot.get_cartesian_pose()

logger.info(f"Tracing circle of radius {radius:.3f} m in YZ plane ({n_steps} steps)")

# Robot motion: draw circle in YZ plane, visualize robot and TCP path

path: list[list[float]] = []

for step in range(n_steps + 1):

theta = 2.0 * np.pi * step / n_steps

# Circle in the YZ plane, offset so it "kisses" the home pose at theta=0.

pose = home_pose.copy()

pose[1] = home_pose[1] + radius * np.cos(theta) - radius

pose[2] = home_pose[2] + radius * np.sin(theta)

# Move the robot

try:

robot.set_cartesian_pose(pose)

except ValueError:

continue # outside reach / joint limits

# Visualize robot and path

visualize_robot(robot)

actual = robot.get_cartesian_pose()

path.append([float(actual[0]), float(actual[1]), float(actual[2])])

visualize_path(path)

# Sleep to maintain a consistent visualization rate.

time.sleep(dt)

if __name__ == "__main__":

main()"""

Telekinesis quickstart: drive a Franka robot along a YZ-plane circle via Cartesian pose targets.

No Hardware Required - runs entirely in software with live visualization in Rerun.

Traces a closed circle of radius 0.10m in the YZ plane around the home TCP pose. The TCP

path is drawn live as a connected line with a hue gradient (older

segments blue, newest red).

Install:

pip install rerun-sdk==0.31 # tested on 0.31

Run:

python examples/py/github_examples/franka_robotics_set_cartesian_pose_with_visualization.py

"""

import colorsys

import time

import numpy as np

import rerun as rr

from loguru import logger

from telekinesis.synapse.robots.manipulators import franka_robotics

def visualize_robot(robot, static_meshes: bool = False) -> None:

"""Log per-link transforms to rerun, plus the static meshes on the first call."""

# Log static meshes once

if static_meshes:

for link, m in robot.get_visual_meshes_data().items():

if m["vertices"] is None:

continue

kwargs: dict = {

"vertex_positions": m["vertices"],

"triangle_indices": m["triangles"],

"vertex_normals": m["vertex_normals"],

}

if m["vertex_colors"] is not None:

kwargs["vertex_colors"] = m["vertex_colors"]

else:

kwargs["albedo_factor"] = m["color"] or [179, 179, 179]

rr.log(f"/robot/{link}", rr.Mesh3D(**kwargs), static=True)

# Log per-link transforms on every update

for link, T in robot.get_visual_mesh_transforms().items():

rr.log(f"/robot/{link}", rr.Transform3D(translation=T[:3, 3], mat3x3=T[:3, :3]))

def visualize_path(path: list[list[float]], entity: str = "/trajectory") -> None:

"""Draw the TCP path as connected segments with a blue→red hue gradient."""

if len(path) < 2:

return

segments = [[path[i], path[i + 1]] for i in range(len(path) - 1)]

n = max(1, len(segments) - 1)

colors = [

[int(c * 255) for c in colorsys.hsv_to_rgb((1.0 - i / n) * (240.0 / 360.0), 1.0, 1.0)]

for i in range(len(segments))

]

rr.log(entity, rr.LineStrips3D(segments, colors=colors, radii=0.003))

def main():

"""Trace a YZ-plane circle around the Panda's home TCP pose, visualized in rerun."""

# Frequency to update the visualization (Hz)

hz = 20

dt = 1.0 / hz

# Radius of the circle to trace (meters)

radius = 0.10

n_steps = 200

# Create robot

robot = franka_robotics.FrankaRoboticsPanda()

# Initialize rerun and log static meshes

rr.init(f"telekinesis_synapse_{type(robot).__name__}", spawn=True)

visualize_robot(robot, static_meshes=True)

time.sleep(2.0)

# Get home pose (default configuration)

home_pose = robot.get_cartesian_pose()

logger.info(f"Tracing circle of radius {radius:.3f} m in YZ plane ({n_steps} steps)")

# Robot motion: draw circle in YZ plane, visualize robot and TCP path

path: list[list[float]] = []

for step in range(n_steps + 1):

theta = 2.0 * np.pi * step / n_steps

# Circle in the YZ plane, offset so it "kisses" the home pose at theta=0.

pose = home_pose.copy()

pose[1] = home_pose[1] + radius * np.cos(theta) - radius

pose[2] = home_pose[2] + radius * np.sin(theta)

# Move the robot

try:

robot.set_cartesian_pose(pose)

except ValueError:

continue # outside reach / joint limits

# Visualize robot and path

visualize_robot(robot)

actual = robot.get_cartesian_pose()

path.append([float(actual[0]), float(actual[1]), float(actual[2])])

visualize_path(path)

# Sleep to maintain a consistent visualization rate.

time.sleep(dt)

if __name__ == "__main__":

main()"""

Telekinesis quickstart: drive a KUKA robot along a YZ-plane circle via Cartesian pose targets.

No Hardware Required - runs entirely in software with live visualization in Rerun.

Traces a closed circle of radius 0.50m in the YZ plane around the home TCP pose. The TCP

path is drawn live as a connected line with a hue gradient (older

segments blue, newest red).

Install:

pip install rerun-sdk==0.31 # tested on 0.31

Run:

python examples/py/github_examples/kuka_set_cartesian_pose_with_visualization.py

"""

import colorsys

import time

import numpy as np

import rerun as rr

from loguru import logger

from telekinesis.synapse.robots.manipulators import kuka

def visualize_robot(robot, static_meshes: bool = False) -> None:

"""Log per-link transforms to rerun, plus the static meshes on the first call."""

# Log static meshes once

if static_meshes:

for link, m in robot.get_visual_meshes_data().items():

if m["vertices"] is None:

continue

kwargs: dict = {

"vertex_positions": m["vertices"],

"triangle_indices": m["triangles"],

"vertex_normals": m["vertex_normals"],

}

if m["vertex_colors"] is not None:

kwargs["vertex_colors"] = m["vertex_colors"]

else:

kwargs["albedo_factor"] = m["color"] or [179, 179, 179]

rr.log(f"/robot/{link}", rr.Mesh3D(**kwargs), static=True)

# Log per-link transforms on every update

for link, T in robot.get_visual_mesh_transforms().items():

rr.log(f"/robot/{link}", rr.Transform3D(translation=T[:3, 3], mat3x3=T[:3, :3]))

def visualize_path(path: list[list[float]], entity: str = "/trajectory") -> None:

"""Draw the TCP path as connected segments with a blue→red hue gradient."""

if len(path) < 2:

return

segments = [[path[i], path[i + 1]] for i in range(len(path) - 1)]

n = max(1, len(segments) - 1)

colors = [

[int(c * 255) for c in colorsys.hsv_to_rgb((1.0 - i / n) * (240.0 / 360.0), 1.0, 1.0)]

for i in range(len(segments))

]

rr.log(entity, rr.LineStrips3D(segments, colors=colors, radii=0.003))

def main():

"""Trace a YZ-plane circle around the KUKA's home TCP pose, visualized in rerun."""

# Frequency to update the visualization (Hz)

hz = 30

dt = 1.0 / hz

# Radius of the circle to trace (meters)

radius = 0.5

n_steps = 200

# Create robot

robot = kuka.KukaKR1502()

# Initialize rerun and log static meshes

rr.init(f"telekinesis_synapse_{type(robot).__name__}", spawn=True)

visualize_robot(robot, static_meshes=True)

time.sleep(2.0)

# Get home pose (default configuration)

home_pose = robot.get_cartesian_pose()

logger.info(f"Tracing circle of radius {radius:.3f} m in YZ plane ({n_steps} steps)")

# Robot motion: draw circle in YZ plane, visualize robot and TCP path

path: list[list[float]] = []

for step in range(n_steps + 1):

theta = 2.0 * np.pi * step / n_steps

# Circle in the YZ plane, offset so it "kisses" the home pose at theta=0.

pose = home_pose.copy()

pose[1] = home_pose[1] + radius * np.cos(theta) - radius

pose[2] = home_pose[2] + radius * np.sin(theta)

# Move the robot

try:

robot.set_cartesian_pose(pose)

except ValueError:

continue # outside reach / joint limits

# Visualize robot and path

visualize_robot(robot)

actual = robot.get_cartesian_pose()

path.append([float(actual[0]), float(actual[1]), float(actual[2])])

visualize_path(path)

# Sleep to maintain a consistent visualization rate.

time.sleep(dt)

if __name__ == "__main__":

main()"""

Telekinesis quickstart: drive a Yaskawa Motoman robot along a YZ-plane circle via Cartesian pose targets.

No Hardware Required - runs entirely in software with live visualization in Rerun.

Traces a closed circle of radius 0.10m in the YZ plane around the home TCP pose. The TCP

path is drawn live as a connected line with a hue gradient (older

segments blue, newest red).

Install:

pip install rerun-sdk==0.31 # tested on 0.31

Run:

python examples/py/github_examples/motoman_set_cartesian_pose_with_visualization.py

"""

import colorsys

import time

import numpy as np

import rerun as rr

from loguru import logger

from telekinesis.synapse.robots.manipulators import motoman

def visualize_robot(robot, static_meshes: bool = False) -> None:

"""Log per-link transforms to rerun, plus the static meshes on the first call."""

# Log static meshes once

if static_meshes:

for link, m in robot.get_visual_meshes_data().items():

if m["vertices"] is None:

continue

kwargs: dict = {

"vertex_positions": m["vertices"],

"triangle_indices": m["triangles"],

"vertex_normals": m["vertex_normals"],

}

if m["vertex_colors"] is not None:

kwargs["vertex_colors"] = m["vertex_colors"]

else:

kwargs["albedo_factor"] = m["color"] or [179, 179, 179]

rr.log(f"/robot/{link}", rr.Mesh3D(**kwargs), static=True)

# Log per-link transforms on every update

for link, T in robot.get_visual_mesh_transforms().items():

rr.log(f"/robot/{link}", rr.Transform3D(translation=T[:3, 3], mat3x3=T[:3, :3]))

def visualize_path(path: list[list[float]], entity: str = "/trajectory") -> None:

"""Draw the TCP path as connected segments with a blue→red hue gradient."""

if len(path) < 2:

return

segments = [[path[i], path[i + 1]] for i in range(len(path) - 1)]

n = max(1, len(segments) - 1)

colors = [

[int(c * 255) for c in colorsys.hsv_to_rgb((1.0 - i / n) * (240.0 / 360.0), 1.0, 1.0)]

for i in range(len(segments))

]

rr.log(entity, rr.LineStrips3D(segments, colors=colors, radii=0.003))

def main():

"""Trace a YZ-plane circle around the Motoman's home TCP pose, visualized in rerun."""

# Frequency to update the visualization (Hz)

hz = 30

dt = 1.0 / hz

# Radius of the circle to trace (meters)

radius = 0.10

n_steps = 200

# Create robot

robot = motoman.MotomanMH5()

# Initialize rerun and log static meshes

rr.init(f"telekinesis_synapse_{type(robot).__name__}", spawn=True)

visualize_robot(robot, static_meshes=True)

time.sleep(2.0)

# Get home pose (default configuration)

home_pose = robot.get_cartesian_pose()

logger.info(f"Tracing circle of radius {radius:.3f} m in YZ plane ({n_steps} steps)")

# Robot motion: draw circle in YZ plane, visualize robot and TCP path

path: list[list[float]] = []

for step in range(n_steps + 1):

theta = 2.0 * np.pi * step / n_steps

# Circle in the YZ plane, offset so it "kisses" the home pose at theta=0.

pose = home_pose.copy()

pose[1] = home_pose[1] + radius * np.cos(theta) - radius

pose[2] = home_pose[2] + radius * np.sin(theta)

# Move the robot

try:

robot.set_cartesian_pose(pose)

except ValueError:

continue # outside reach / joint limits

# Visualize robot and path

visualize_robot(robot)

actual = robot.get_cartesian_pose()

path.append([float(actual[0]), float(actual[1]), float(actual[2])])

visualize_path(path)

# Sleep to maintain a consistent visualization rate.

time.sleep(dt)

if __name__ == "__main__":

main()3. Run it

Run the script for the brand you chose:

python quickstart_set_cartesian_pose_<brand>.pyA Rerun window will open with the manipulator rendered in 3D. The arm traces a closed circle in the YZ plane around its home pose, and the TCP path is drawn live as a connected line with a hue gradient (older segments blue, newest red). If you see the robot smoothly trace the circle, Synapse's offline kinematics and set_cartesian_pose pipeline are working correctly.

Move a real robot

This starter works entirely offline. To drive a physical robot, wrap the motion loop with robot.connect(ip=...) and robot.disconnect() - the same Skill call then commands hardware.

Safety first!

A real robot will faithfully do whatever you ask of it - so please take a moment to clear the workspace, keep an E-Stop within reach, and be ready to disconnect.

Operating real hardware is at your own risk.

For more Synapse starters, check out the Synapse Quickstart.

Try It Now: Vision to Robot Pose (Application)

This starter chains four modules into a single vision-to-pose pipeline: capture a frame from a webcam (Medulla), segment the foreground object (Cornea), project its centroid into 3D (Pupil), then solve inverse kinematics so a UR5 reaches that point (Synapse). Use it as a template for wiring real perception into real robot pose.

Hardware + Synapse setup required

You need a USB webcam (a built-in laptop camera works) and the one-time Synapse setup from the Set Cartesian Pose starter - telekinesis-urdfs.

Show install, script, and run

1. Install the runtime dependencies

In your active telekinesis environment, install the Synapse and Medulla additionals and the visualization dependencies:

Ensure one-time Synapse setup from the Set Cartesian Pose starter - telekinesis-urdfs is done.

pip install "telekinesis-ai[synapse, medulla]"

pip install rerun-sdk==0.31.22. Save the script

Create a file called quickstart_vision_to_robot_pose_example.py anywhere on your machine and paste the following:

"""Telekinesis quickstart: vision-to-pose end-to-end pipeline.

Captures a webcam frame, segments the foreground with BiRefNet, projects

the object centroid into the camera frame, transforms it into the robot

base frame, and solves inverse kinematics for a UR5 to reach it.

Also logs the main pipeline outputs to Rerun for visualization.

Replace the webcam input and camera/robot transforms with your own for real-world use.

Run as a script - python quickstart_vision_to_robot_pose_example.py

"""

import numpy as np

from loguru import logger

import rerun as rr

from telekinesis import cornea, pupil

from telekinesis.medulla.cameras import webcam

from telekinesis.synapse.robots.manipulators import universal_robots

def main() -> None:

# Initialize Rerun.

rr.init("telekinesis_vision_to_pose_quickstart", spawn=True)

# Create a webcam object and a UR5 robot model.

camera = webcam.Webcam(name="my_webcam_camera", camera_id=0)

robot = universal_robots.UniversalRobotsUR5()

# Medulla Skill: Connect to the camera, capture one image, then disconnect.

camera.connect()

image = camera.capture_color_image()

camera.disconnect()

# Visualize input image.

rr.log("vision/input_image", rr.Image(image))

# Cornea Skill: Segment the foreground object from the captured image.

result = cornea.segment_image_using_foreground_birefnet(

image=image,

mask_threshold=125,

)

# Extract the segmentation mask from the result.

mask = result.to_dict()["labeled_mask"]

# Visualize foreground segmentation.

rr.log(

"vision/foreground_segmentation",

rr.SegmentationImage(mask.astype(np.uint8)),

)

# Pupil Skill: Calculate the center of the detected object in image pixel coordinates.

centroid = pupil.calculate_image_centroid(mask=mask)

centroid_np = centroid.to_numpy()

# Visualize centroid on the image.

rr.log(

"vision/centroid",

rr.Points2D(

positions=[centroid_np[:2]],

radii=8,

labels=["centroid"],

),

)

# Example depth value in meters.

# Update Me! 1

depth = 0.40

# Example camera intrinsic matrix.

# Update Me! 2

camera_intrinsics = np.asarray(

[

[600.0, 0.0, image.shape[1] / 2.0],

[0.0, 600.0, image.shape[0] / 2.0],

[0.0, 0.0, 1.0],

]

)

# Example distortion coefficients.

# Update Me! 3

distortion_coefficients = np.zeros(5)

# Pupil Skill: Project the 2D image centroid into a 3D point in the camera frame.

camera_T_point = pupil.project_pixel_to_camera_point(

camera_intrinsics=camera_intrinsics,

distortion_coefficients=distortion_coefficients,

pixel=centroid,

depth=depth,

)

# Convert the Telekinesis Mat4X4 object into a NumPy 4x4 matrix.

camera_T_point_np = camera_T_point.to_numpy()

# Extract 3D point in camera frame.

camera_point_position = camera_T_point_np[:3, 3]

# Visualize projected 3D camera point.

rr.log(

"scene/camera_point",

rr.Points3D(

positions=[camera_point_position],

radii=0.01,

labels=["camera_point"],

),

)

# Example transform from the robot base frame to the camera frame.

robot_T_camera = np.eye(4)

# Update Me! 4

robot_T_camera[:3, 3] = np.asarray([0.30, 0.00, 0.20])

# Transform the point from the camera frame into the robot base frame.

robot_T_point = robot_T_camera @ camera_T_point_np

# Extract robot target position.

robot_point_position = robot_T_point[:3, 3]

# Visualize robot target pose.

rr.log(

"scene/robot_target_pose",

rr.Transform3D(

translation=robot_point_position,

mat3x3=robot_T_point[:3, :3],

),

)

# Build the target pose for inverse kinematics. Orientation is Euler XYZ in degrees.

target_pose = [

robot_T_point[0, 3],

robot_T_point[1, 3],

robot_T_point[2, 3],

0.0,

180.0,

0.0,

]

# Initial joint configuration for the IK solver (degrees).

q_init = np.asarray([0.0, -90.0, -90.0, 0.0, 90.0, 0.0])

# Set the default joint configuration of the robot

robot.set_default_joint_configuration(value=q_init)

# Synapse Skill: Move to target Cartesian Pose

robot.set_cartesian_pose(

cartesian_pose=target_pose,

speed=0.1,

acceleration=0.1

)

logger.info(f"Centroid pixel: {centroid_np}")

logger.info(f"Camera point: {camera_T_point_np}")

logger.info(f"Target pose: {target_pose}")

if __name__ == "__main__":

main()3. Run it

python quickstart_vision_to_robot_pose_example.pyYou should see the centroid pixel, the 3D camera point, the target pose, and the IK joint solution printed in your terminal. If all four lines log without errors, your full vision-to-robot-pose stack - Medulla, Cornea, Pupil, and Synapse - is wired up correctly.

Replace the placeholders with calibrated values

The depth, camera intrinsics, distortion coefficients, and robot_T_camera transform are placeholders(commented as # Update Me!) so the script runs out of the box. For real workloads, swap them for values from your camera calibration and hand-eye calibration.

Stay in the Loop

Tried a starter? Two great places to keep exploring - get help and ideas from the community on Discord, or browse every Skill source-side before you decide what to clone.

telekinesis-examples before cloning — full source, sample data, and READMEs. Run the Full Examples Library

Once you've verified your install with one of the inline starters above, the telekinesis-examples repository gives you dozens more pre-built Skills across vision, robotics, and 3D - each runnable with a single command.

Requires

gitinstalled- Roughly 1.5 GB of free disk space (the repository pulls a sample-data submodule).

Show clone, install, and run steps

1. Clone the examples repository

Clone the telekinesis-examples repository together with its sample-data submodule:

git clone --depth 1 --recurse-submodules --shallow-submodules \

https://github.com/telekinesis-ai/telekinesis-examples.git

cd telekinesis-examplesWhat gets downloaded?

The flags above keep the clone small:

--depth 1fetches only the latest commit, not full history.--recurse-submodules --shallow-submodulesalso pulls thetelekinesis-datasubmodule (sample images, point clouds, robot states), again atdepth 1.

When you start using Telekinesis on your own data, you can ignore the telekinesis-data submodule - it exists purely to make the examples runnable out of the box.

2. Install example dependencies

The core Telekinesis Skill Library package (telekinesis-ai) is intentionally lean. The examples additionally use SciPy, Rerun for visualization, and pycocotools for COCO-format helpers.

Make sure your telekinesis environment from the Installation guide is active, then install everything in one go:

conda activate telekinesis

pip install rerun-sdk==0.31.2 pycocotools scipy3. Run your first examples

Each module ships with a runner script - cornea_examples.py, retina_examples.py, pupil_examples.py, and so on. You select an example by passing its name to --example. Try the two starters below.

Starter 1: Segment an image with SAM

This runs segment_image_using_sam from the Cornea (segmentation) module. It loads a sample image, runs the Segment Anything Model on it, and visualizes the resulting masks in Rerun.

python examples/cornea_examples.py --example segment_image_using_samA Rerun window will open showing the input image and the predicted segmentation masks side by side.

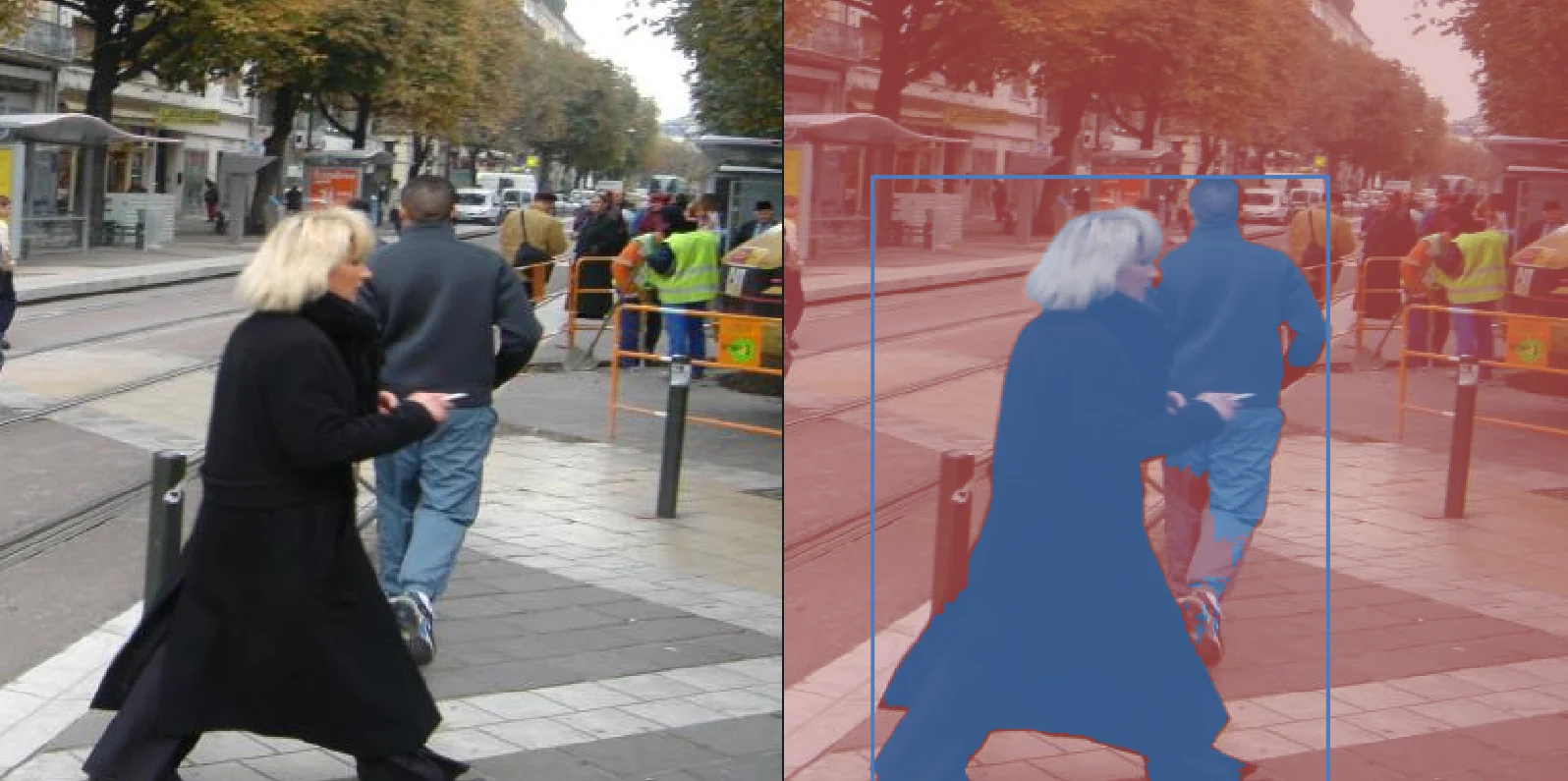

Input → Masked Output

Starter 2: Detect objects with RF-DETR

This runs detect_objects_using_rfdetr from the Retina (object detection) module. It loads a warehouse image, runs the transformer-based RF-DETR detector, and overlays the detected COCO-class bounding boxes in Rerun.

python examples/retina_examples.py --example detect_objects_using_rfdetrYou should see annotated bounding boxes with class labels (person, forklift, box, …) and confidence scores drawn over the input image.

Input → Detected Objects

What Just Happened?

Each example you just ran follows the same pattern, and this is the pattern you'll reuse when writing your own Telekinesis programs:

- Load data into a strongly-typed structure - the runner reads an image from disk into a

datatypes.Image(or a point cloud intodatatypes.PointCloud, etc.). Every Skill in the Skill Library consumes and produces these typed objects, so they compose cleanly. - Call a Skill - a single, well-named function such as

cornea.segment_image_using_sam(...),vitreous.filter_point_cloud_using_voxel_downsampling(...), orrobot.forward_kinematics(...). Skills are pure functions: typed inputs in, typed outputs out. - Visualize the result with Rerun - the runner forwards the inputs and outputs to a Rerun viewer so you can inspect the result interactively. This is optional in your own code but extremely useful while developing.

telekinesis package and function: parameters, return types, and links into the implementation source. Explore More Examples

Each module has a runner that supports --list to print every available example.

python examples/cornea_examples.py --listSample output:

Available examples:

- filter_segments_by_area

- filter_segments_by_color

- segment_image_using_adaptive_threshold

- segment_image_using_felzenszwalb

- segment_image_using_grab_cut

- segment_image_using_otsu_threshold

- segment_image_using_sam

- segment_image_using_slic_superpixel

- segment_image_using_watershed

- ...Run any of them with:

python examples/cornea_examples.py --example <name_of_example>Available example runners

| Module | What it covers | Runner |

|---|---|---|

| Cornea | 2D image segmentation (classical + SAM) | examples/cornea_examples.py |

| Retina | 2D object detection (YOLOX, RF-DETR, Grounding DINO, Qwen) | examples/retina_examples.py |

| Pupil | Image processing primitives (filtering, geometry, math) | examples/pupil_examples.py |

| Vitreous | 3D point cloud processing (filtering, registration, segmentation) | examples/vitreous_examples.py |

| Datatypes | Working with the canonical Telekinesis data types directly | examples/datatypes_examples.py |

| Medulla | Medulla hardware communication skills for 2D and 3D cameras (Webcam, IDS, Intel RealSense) | examples/medulla/<vendor>/<script>.py |

| Synapse | Motion planning, kinematics, and control across industrial, mobile, and humanoid robots | Coming soon... |

For Cornea, Retina, Pupil, Vitreous, pass --list to any runner to discover its examples, then --example <name> to run one.

Source links

Troubleshooting

A few things that occasionally trip people up on first run:

pipcannot installtelekinesis-ai/ “No matching distribution found” - the SDK supports Python 3.11 and 3.12 only. Check withpython --version; if you are on another release, create a new environment. See Step 3 of the Installation guide.ModuleNotFoundError: telekinesis- yourtelekinesisconda environment is not active. Runconda activate telekinesisand re-run the example.Authentication errors /

401 Unauthorized-TELEKINESIS_API_KEYis not set in the current shell. Verify withecho $TELEKINESIS_API_KEY(macOS/Linux) orecho $env:TELEKINESIS_API_KEY(Windows). See Step 2 of the Installation guide.Rerun window does not open - make sure you installed the pinned version:

pip install rerun-sdk==0.31.2. Try again, as sometimes, it could get blocked the first time. Else see the Rerun docs for more information.

Where To Go Next

Now that you have run your first Skills end-to-end, here are the natural next steps depending on what you want to build.