Detect Silver Glasses from Webcam Image

This example demonstrates how to use Tzara, the Telekinesis Physical AI Agent, to build a computer vision pipeline that detects silver glasses in a webcam image. Tzara generates code that captures a frame with the Telekinesis `Webcam` class, runs a pre-trained Qwen vision-language model (VLM) to detect the glasses, and draws the resulting bounding boxes and confidence scores with OpenCV.

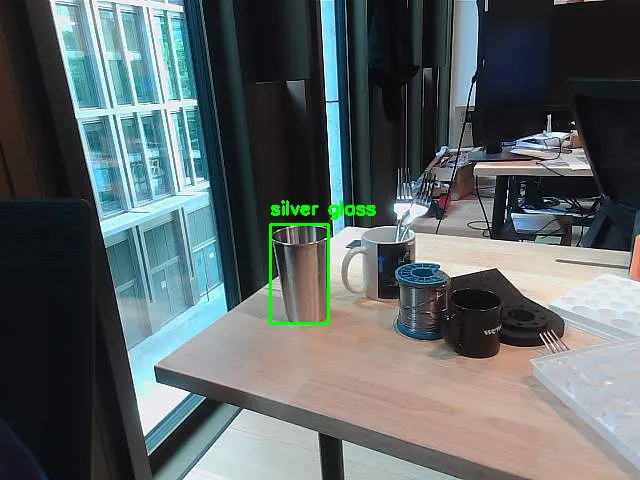

Silver glasses detected with Qwen VLM
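The pipeline described above has three stages: capture a frame, detect objects in it, and visualize the detections. The sketch below passes each stage in as a function so the control flow can be exercised without a webcam or model. This is only a minimal illustration, not how Tzara structures its generated code. `run_pipeline` and the `fake_*` stages are hypothetical names, not part of the Telekinesis API.

```python
from typing import Any, Callable, Dict, List

# Hypothetical aliases: a frame can be any image object; a detection is a dict
# with "bbox" and an optional "score", mirroring how the generated code reads them.
Frame = Any
Detection = Dict[str, Any]


def run_pipeline(
    capture: Callable[[], Frame],
    detect: Callable[[Frame, str], List[Detection]],
    visualize: Callable[[Frame, List[Detection]], Any],
    prompt: str,
) -> Any:
    """Run one capture -> detect -> visualize pass and return the visual output."""
    frame = capture()
    detections = detect(frame, prompt)
    return visualize(frame, detections)


# Stand-in stages (hypothetical) so the flow runs without hardware or a model.
def fake_capture() -> Frame:
    return "frame-0"


def fake_detect(frame: Frame, prompt: str) -> List[Detection]:
    return [{"bbox": [10, 20, 30, 40], "score": 0.9, "label": prompt}]


def fake_visualize(frame: Frame, detections: List[Detection]) -> Any:
    return frame, [d["label"] for d in detections]


result = run_pipeline(fake_capture, fake_detect, fake_visualize, "silver glasses")
print(result)  # ('frame-0', ['silver glasses'])
```

In a real setup, the fake stages would be replaced by wrappers around the webcam capture, the Qwen detection call, and the OpenCV drawing code.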

The Natural Language Instruction

The natural language instruction for this task is:

Capture an image and detect the silver glasses in the image and visualize using OpenCV

The Generated Code

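The generated code captures an image from the webcam, detects silver glasses using the Qwen VLM, and draws a bounding box and confidence score for each detected pair of glasses. It then displays the annotated image, saves it to `silver_glasses_detection.png`, and prints the number of detections.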

```python
# Pipeline: capture an image from the webcam, detect silver glasses, visualize the detections
# Steps:
# 1. Connect to the webcam and capture a frame
# 2. Wrap the frame in a datatypes.Image
# 3. Detect "silver glasses" with the Qwen VLM
# 4. Draw the detections on the frame
# 5. Display and save the result

import cv2

from datatypes import datatypes
from telekinesis.medulla.cameras.webcam import Webcam
from telekinesis import retina

webcam = Webcam(name="main_webcam", camera_id=0)
webcam.connect()

try:
    # Step 1: Capture a single color frame from the webcam
    frame = webcam.capture_color_image()

    # Step 2: Wrap the captured frame in a datatypes.Image
    image = datatypes.Image(image=frame)

    # Step 3: Use the Qwen VLM to detect "silver glasses"
    annotations = retina.detect_objects_using_qwen(
        image=image,
        prompt="silver glasses",
    )

    # Step 4: Draw each detection; bbox is read as [x, y, width, height]
    vis_frame = frame.copy()
    for ann in annotations.annotations:
        bbox = ann.get("bbox")
        if bbox is None:
            continue  # skip annotations without a bounding box
        x, y, w, h = (int(v) for v in bbox[:4])
        score = ann.get("score")
        cv2.rectangle(vis_frame, (x, y), (x + w, y + h), (0, 255, 0), 2)
        label = "silver glasses"
        if score is not None:
            label = f"{label}: {score:.2f}"
        cv2.putText(vis_frame, label, (x, max(y - 10, 0)),
                    cv2.FONT_HERSHEY_SIMPLEX, 0.6, (0, 255, 0), 2)

    # Step 5: Display and save the result
    # The frame is treated as RGB, so convert to BGR for OpenCV display and saving
    vis_bgr = cv2.cvtColor(vis_frame, cv2.COLOR_RGB2BGR)
    cv2.imshow("Silver Glasses Detection", vis_bgr)
    cv2.waitKey(0)  # block until a key is pressed in the window

    output_path = "silver_glasses_detection.png"
    cv2.imwrite(output_path, vis_bgr)
    print(f"Detection result saved to: {output_path}")
    print(f"Number of detections: {len(annotations.annotations)}")

finally:
    # Always release the camera and close any OpenCV windows
    webcam.disconnect()
    cv2.destroyAllWindows()
```
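The drawing loop above passes box coordinates straight to `cv2.rectangle` without clamping them to the frame. The sketch below converts boxes to clamped corner points and optionally drops low-confidence detections. It assumes, like the generated code, that each annotation is a dict whose `bbox` is [x, y, width, height] and whose `score` is optional. `bbox_to_corners` and `drawable_boxes` are hypothetical helpers, not part of the Telekinesis API.

```python
from typing import Any, Dict, List, Optional, Sequence, Tuple

Point = Tuple[int, int]
Corners = Tuple[Point, Point]


def bbox_to_corners(bbox: Sequence[float], width: int, height: int) -> Corners:
    """Convert an assumed [x, y, w, h] box to integer (top-left, bottom-right)
    corners, clamped to an image of the given width and height."""
    x, y, w, h = (float(v) for v in bbox)
    x1 = min(max(int(x), 0), width - 1)
    y1 = min(max(int(y), 0), height - 1)
    x2 = min(max(int(x + w), 0), width - 1)
    y2 = min(max(int(y + h), 0), height - 1)
    return (x1, y1), (x2, y2)


def drawable_boxes(
    annotations: List[Dict[str, Any]],
    width: int,
    height: int,
    min_score: Optional[float] = None,
) -> List[Tuple[Corners, Optional[float]]]:
    """Return (corners, score) pairs, skipping malformed boxes and, when
    min_score is set, detections whose score falls below it."""
    boxes = []
    for ann in annotations:
        bbox = ann.get("bbox")
        if bbox is None or len(bbox) != 4:
            continue  # nothing drawable
        score = ann.get("score")
        if min_score is not None and score is not None and score < min_score:
            continue  # below the confidence threshold
        boxes.append((bbox_to_corners(bbox, width, height), score))
    return boxes


detections = [
    {"bbox": [600, 400, 100, 120], "score": 0.9},  # runs past a 640x480 frame
    {"bbox": [-5, 10, 50, 20], "score": 0.3},      # starts left of the frame
    {"score": 0.8},                                # no box: skipped
]
print(drawable_boxes(detections, width=640, height=480, min_score=0.5))
# [(((600, 400), (639, 479)), 0.9)]
```

Each corner pair can be passed as the `pt1` and `pt2` arguments of `cv2.rectangle`. If the detector actually returns [x1, y1, x2, y2] boxes, the width and height arithmetic in `bbox_to_corners` would need to change.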